AI Rabbit Holes

Exploring Simulation Theory with an On-Device LLM

Apple is said to be pursuing a different AI strategy from other tech companies. Rather than focusing on cloud inference, which raises privacy and data concerns, they are focusing on making AI run on-device, on their powerful Apple Silicon platform.

To be fair, they’ve always been fashionably late to the party when it comes to new technology, preferring to perfect rather than innovate, and this has worked well for them in the past.

But this seems different somehow. They are betting that edge-based (on-device) AI will become good enough to do powerful work without needing to make API calls.

Many will debate this. I decided to put it to the test.

Enter LLaMA 3.2

I had been chomping at the bit to test an on-device LLM in a production setting. Like many devs, I’d been quietly waiting for Apple to give us direct access to their Foundation Model.

When this didn’t pan out in 2024, I began to wonder whether an iPhone or iPad was even powerful enough (or had enough memory) to run a decent LLM.

Then I came across a project that used a quantized model in a specific format, which delivered incredible results both in tokens per second and in model size. Suddenly LLaMA 3.2 could run on-device.

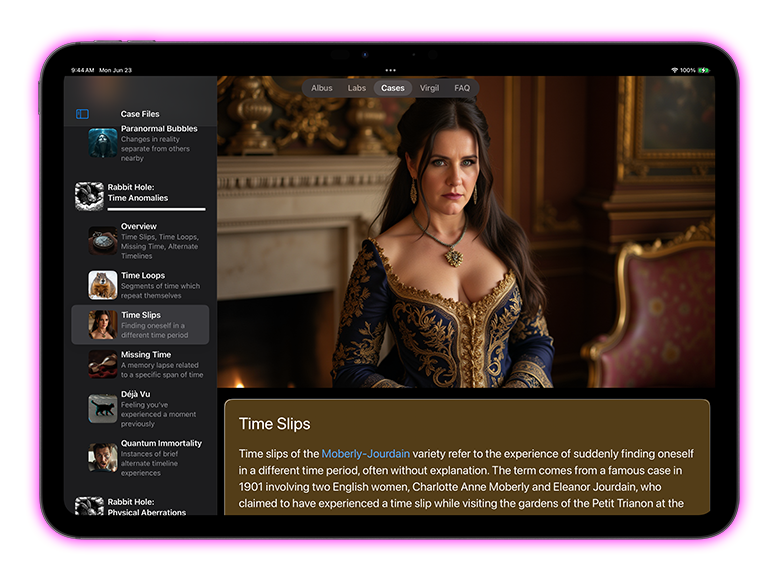

I decided to create a project that would showcase this new capability. I wondered how people would react to an on-device AI dedicated to entertainment: the exploration of Simulation Theory.

My business partner Jill Beitz and I set out to see how AI could be used to explore every aspect of a speculative, fictional topic.

App Specifications

When we started our project (with the working title “Simulation Rabbit Holes”), we set the following goals:

- We would use frontier models (Claude, ChatGPT) to explore different aspects of the topic. We would control the topic, the narrative, and the context, and we would heavily edit all results, but the cases and scenarios would all flow through these AI tools.

- We would create a chat experience with an on-device LLM that stayed true to the spirit of the content (the prospect of living in a simulation).

- All artwork would be AI-generated, ideally on our own server running Flux Dev with ComfyUI.

Late in the project, we also decided to add our likenesses to the app (see Jill’s image below).

Custom LoRA

To render our likenesses in the same style as the rest of the artwork, we needed to train a custom Low-Rank Adaptation (LoRA) on our likenesses for our image model, Flux Dev.
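
For readers unfamiliar with LoRA, here is the general idea from the original LoRA formulation (not a description of our specific training setup): the pretrained weights stay frozen, and only a small low-rank update is learned. For a pretrained weight matrix $W_0 \in \mathbb{R}^{d \times k}$ and input $x$:

$$
h = W_0 x + \Delta W x = W_0 x + \frac{\alpha}{r} B A x, \qquad B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k)
$$

Only $A$ and $B$ are trained, so the adapter adds $r(d + k)$ parameters instead of $dk$. As an illustration (the dimensions and rank are assumed, not our actual settings), a $3072 \times 3072$ projection with rank $r = 16$ trains $16 \times 6144 = 98{,}304$ parameters instead of about 9.4 million, roughly 1%. The rank and the number of training steps are commonly treated as the main levers on how tightly the adapter fits its training images, which is where over-fitting comes in.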

After several decent attempts, I was able to home in on the factors that matter most for producing a well-trained model that didn’t suffer from over-fitting.

The LoRA produced such a perfect likeness of Jill that Apple’s face recognition in Photos tagged her in the newly generated images in her camera roll.

It was also still flexible enough to be applied to different prompted environments, artistic styles, and lighting conditions.

We were truly living in a simulation of sorts.

I also used Apple’s SceneKit to create a custom, RPG-inspired 3D chat interface built around a rendered terminal.

Lessons/Takeaways

Overall, I feel the app succeeds in a number of ways:

- The chat output streams quickly and produces compelling answers that stay true to the central premise. (Guardrails were successfully managed.)

- The graphics I produced using ComfyUI/Flux are absolutely beautiful and highlight what can be done in-house.

- ChatGPT created some truly original thought experiments through proper prompting/editing.

- Our decision to pair SwiftUI with a glassmorphism interface seems prescient, given that Apple had not yet adopted glassmorphism when I was designing the UI.

Downsides:

- The quantized LLaMA 3.2 model we ship adds a lot to the app’s size. It’s hard to sell a 2 GB app.

- Our choice of app name, “Red Pill Rabbit Holes,” turned out to be too closely tied to the manosphere “red pill” movement rather than to The Matrix, which was the reference we intended. (A name change is in the works.)
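
For a rough sense of where the 2 GB goes, here is a back-of-the-envelope estimate. It assumes the 3B-parameter LLaMA 3.2 variant quantized to about 4 bits per weight. The exact variant and quantization format we ship are not specified here, so treat these numbers as illustrative:

$$
\text{size} \approx N_{\text{params}} \times \frac{b}{8}\ \text{bytes} = 3.2 \times 10^{9} \times \frac{4}{8} = 1.6 \times 10^{9}\ \text{bytes} \approx 1.6\ \text{GB}
$$

The same arithmetic for the 1B variant gives roughly $1.2 \times 10^{9} \times 0.5 \approx 0.6$ GB. Tensors kept at higher precision and file-format metadata add to this floor, which could plausibly account for the rest of a download in the 2 GB range.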